The History of Hardware and Machine Intelligence

Author

김 경진

Date

2026-02-26 23:22

A History of Intelligence That Began with Gears

In 1837, Charles Babbage interlocked brass gears and attempted to compute. One calculation per second. That was the first thought humanity ever entrusted to a machine.

A hundred years later, vacuum tubes arrived. Electrons flowed through glass tubes, performing 5,000 calculations per second. Five thousand times faster than gears—yet they filled entire rooms and radiated tremendous heat. In the 1950s, silicon transistors took their place. Fingernail-sized devices capable of 100,000 operations per second. The problems of heat and space shrank at once.

It did not stop there. In the 1970s, CPU chips reached one billion operations per second. In the 2000s, GPUs hit ten trillion. By the 2020s, data centers achieved one exaFLOPS, that is, a billion billion (10^18) floating-point operations per second. Compared to Babbage’s gears, that is one quintillion times faster.
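The ratios behind these figures can be checked with a few lines of arithmetic. The Python sketch below is only illustrative: it takes the rounded rates quoted in this post at face value, assumes one exaflop means 10^18 operations per second, and uses hypothetical names (`RATES`, `speedup_over_babbage`) that appear nowhere in the lecture materials.

```python
# Rounded operations-per-second figures as quoted in this post (not measured data).
RATES = {
    "Babbage gears (1837)": 1,
    "Vacuum tubes": 5_000,
    "Silicon transistors (1950s)": 100_000,
    "CPU chips (1970s)": 10**9,
    "GPUs (2000s)": 10**13,
    "Data centers (2020s)": 10**18,  # assumption: 1 exaflop = 10**18 operations per second
}


def speedup_over_babbage(rate: int) -> int:
    """Return how many times faster `rate` is than the one-per-second baseline."""
    return rate // RATES["Babbage gears (1837)"]


if __name__ == "__main__":
    # Print each era's speedup over the gear-driven baseline in scientific notation.
    for era, rate in RATES.items():
        print(f"{era}: {speedup_over_babbage(rate):.0e}x")
```

Under these assumptions, the ratio for the final entry comes out to 10^18.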

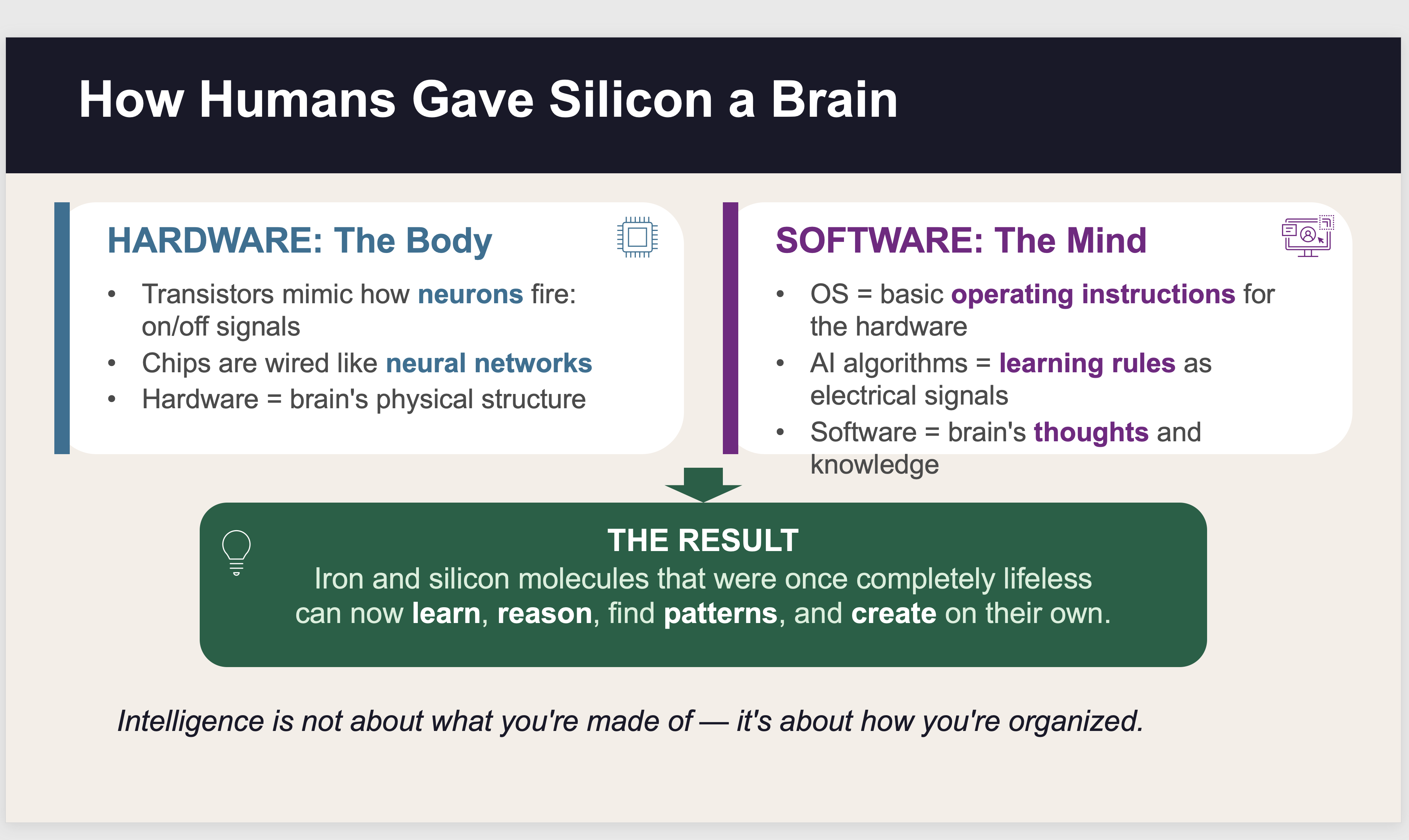

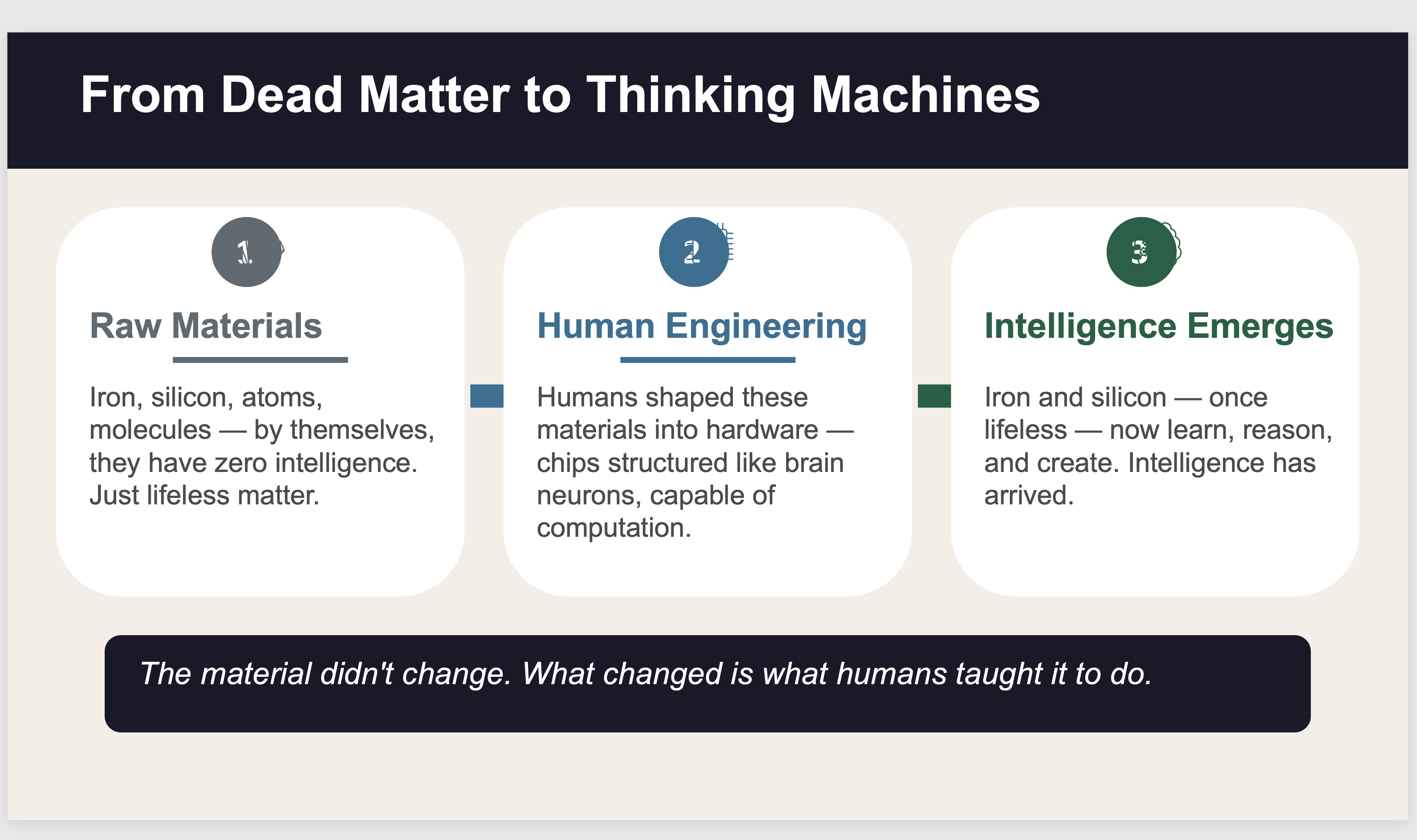

The fascinating point is that the materials themselves never changed. Iron and silicon, and the atoms that make them up, existed on Earth from the beginning. On their own, they possessed no intelligence whatsoever. What humans did was arrange and structure those materials. Transistors loosely mimic the way neurons in the brain send and receive signals, and the circuits on chips came to be organized to run artificial neural networks.

The human brain has 86 billion neurons connected by 100 trillion synapses, operates on electrochemical signals, and consumes just 20 watts of power. A semiconductor chip has billions of transistors that work through electrical on/off signals and learns by adjusting neural network weights, but a single chip can consume over 300 watts. The principle is the same; the material is different.

If hardware is the body, software is the mind. The operating system sets the basic rules of operation, and AI algorithms convert the rules of learning into electrical signals. As a result, iron and silicon molecules that were once completely lifeless have become entities that learn, reason, find patterns, and even create on their own.

The history of computing is the history of mastering the switch—fitting more computational power into ever smaller spaces. At the end of that journey, intelligence was born.

In Genesis, it is written that God formed the body of man from the dust of the ground and breathed into his nostrils the breath of life, and man became a living being. There is a resemblance. From iron and silicon mined from the earth, humans shaped circuit structures suited for thought and reasoning, then breathed into them the diverse algorithms that infer, discover patterns, and generate on their own. As a lump of clay became a person, a lump of mineral became a thinking machine. What the Creator once did to dust, the created—humans—are now doing to silicon.

The slides above are from lecture materials I am preparing for a course, starting next week, in which I will teach AI in English to international students studying in Korea. As I work on the lecture notes, various reflections come to mind.

#ArtificialIntelligence #AIHistory #HardwareEvolution #ComputingRevolution #Transistor #Semiconductor #GPU #NeuronsAndChips #SiliconBrain #Babbage #VacuumTube #AIHardware #DataCenter #GenesisAndAI #KimKyungjinAI